Summary: Drones that understand natural language and autonomously find targets in outdoor environments have long been hindered by slow AI inference, mismatched planning frequencies, and the need to hover while computing. This article introduces AirHunt, a breakthrough drone navigation system that bridges Vision-Language Model (VLM) semantics with continuous flight planning, enabling zero-shot, continuous-flight, efficient open-set object search in outdoor environments. Validated in both simulation and real-world flights, AirHunt outperforms existing approaches by a wide margin.

Authors: Xuecheng Chen, Zongzhuo Liu, Jianfa Ma, Bang Du, Tiantian Zhang, Xueqian Wang, Boyu Zhou

Affiliations: Tsinghua University Shenzhen International Graduate School, Southern University of Science and Technology

Paper: AirHunt: Bridging VLM Semantics and Continuous Planning for Efficient Aerial Object Navigation

Research Background: Three Critical Bottlenecks in Drone Autonomous Navigation

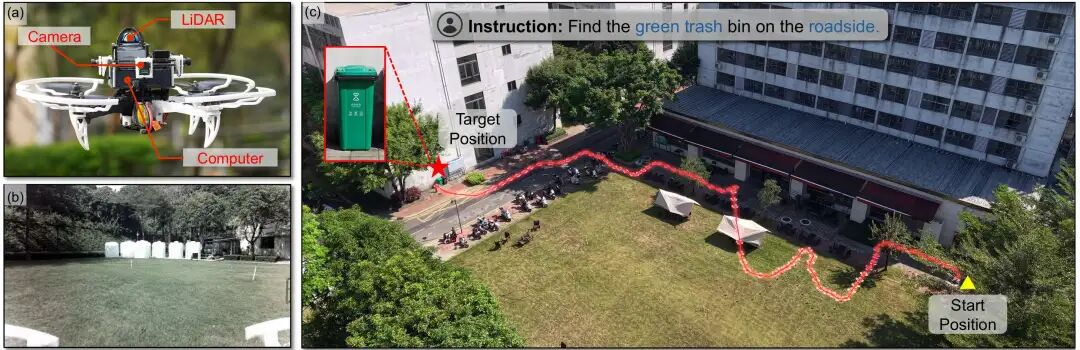

Autonomous target search by drones is a core requirement for emergency rescue, security patrol, environmental exploration, and material locating. Traditional drone navigation relies on closed-set detectors and pure geometric planning — completely unable to handle open-set, natural language instructions like “find the green trash can by the roadside” or “locate the red backpack in the forest.”

With the rise of Vision-Language Models (VLMs), drones can finally understand human language and scene semantics. However, real-world deployment faces three fatal bottlenecks:

- Severe Frequency Mismatch: A single VLM inference takes more than 2000 ms, while real-time drone planning runs at 10 Hz (a 100 ms cycle). Conventional solutions force the drone to hover while it waits for the VLM, interrupting continuous flight and sharply reducing efficiency.

- Weak 3D Scene Understanding: VLMs only see 2D images and cannot associate objects across viewpoints or build global spatial maps, leading to wrong directions and repeated searches.

- Inefficient Trade-offs: Planners either chase semantic cues and take detours, or fly blindly by distance, resulting in high power consumption, slow searches, and missed targets.
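The frequency mismatch is easy to quantify. The sketch below uses the 2000 ms and 100 ms figures from the list above; the mission size of 50 VLM queries is a hypothetical number chosen only for illustration.

```python
VLM_LATENCY_MS = 2000  # one VLM inference (lower bound quoted above)
PLAN_PERIOD_MS = 100   # one 10 Hz planning cycle


def cycles_per_inference(vlm_ms: int = VLM_LATENCY_MS, plan_ms: int = PLAN_PERIOD_MS) -> int:
    """Planning cycles that elapse while a single VLM inference is running."""
    return vlm_ms // plan_ms


def stop_and_wait_hover_s(num_queries: int, vlm_ms: int = VLM_LATENCY_MS) -> float:
    """Seconds spent hovering if the drone stops for every VLM query."""
    return num_queries * vlm_ms / 1000


print(cycles_per_inference())     # 20
print(stop_and_wait_hover_s(50))  # 100.0 (50 queries is a hypothetical mission)
```

In other words, every VLM answer arrives about 20 planning cycles late, and a hover-and-wait design spends that entire interval standing still on every query.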

These three problems have kept VLM-based drone navigation confined to laboratories, unable to serve complex outdoor scenarios. At Aomway, we track the latest advances in autonomous systems to bring you actionable insights.

Three Core Breakthroughs Redefining Drone Navigation

1. Architectural Breakthrough: Dual-Path Asynchronous Design

AirHunt transforms the VLM from a “direct flight command issuer” into a “high-level semantic generator.” Through a 3D value map, it decouples inference from planning — AI reasoning and drone flight proceed simultaneously without waiting, enabling continuous flight.

2. Algorithmic Breakthrough: Dual-Core Module Enhancement

- Active Dual-Task Reasoning (ADTR): Intelligently filters keyframes so the VLM does not need to run on every frame, dramatically reducing computational overhead.

- Semantic-Geographic Consistency Planning (SGCP): Dynamically balances “semantic priority” and “flight efficiency” — finding targets both quickly and accurately.

3. Performance Breakthrough: Full-Scene Validation

- Multi-scene simulation success rate: 73.1%

- Navigation error: only 11.6 meters

- Flight time reduction: 59.2%

- Ten hours of real-world outdoor flight validation: stable performance in complex scenarios with full zero-shot generalization capability.

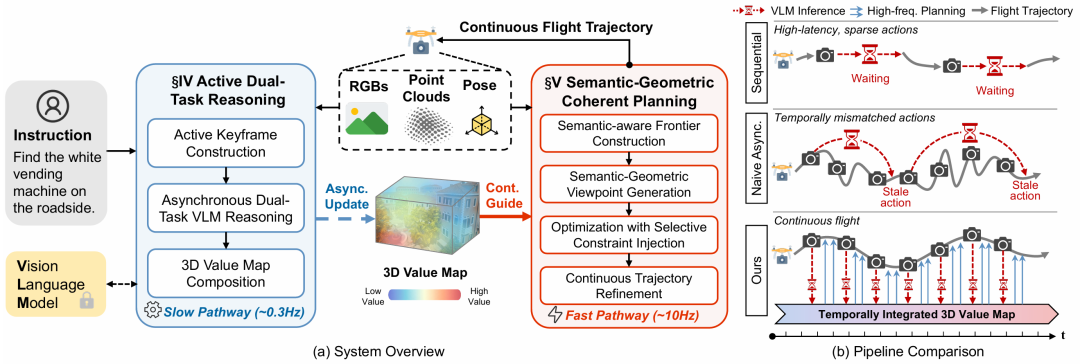

System Overview: Dual-Path Asynchronous Engine

Task Definition

AirHunt addresses open-set object navigation for drones in large-scale unknown outdoor environments. The drone receives a natural language instruction (e.g., “find the black trash can by the roadside”), autonomously explores the environment, locates arbitrary open-set targets, and reaches them via the shortest path without collisions.

Dual-Path Asynchronous Architecture

System architecture: dual-path asynchronous design (Source: AirHunt paper)

AirHunt adopts a “one slow, one fast” dual-path asynchronous architecture that resolves this timing conflict:

- Slow Path (≈0.3Hz): VLM semantic reasoning, asynchronously updating the 3D value map, continuously fusing scene semantic information.

- Fast Path (≈10Hz): High-frequency real-time planning, reading the 3D value map in real-time, generating uninterrupted, smooth continuous flight trajectories.

Core advantage: VLM inference does not delay flight; flight planning does not depend on real-time AI response — truly achieving thinking while flying.
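A minimal discrete-time sketch of the “one slow, one fast” idea follows. It is not AirHunt's code: the map is reduced to a version counter, the 3,300 ms VLM period is an assumed value matching ≈0.3 Hz, and a finished VLM query immediately triggers the next one. What it shows is that the 10 Hz planner never skips a cycle; it simply plans on the latest map it has.

```python
from dataclasses import dataclass


@dataclass
class ValueMapState:
    version: int = 0  # bumped each time a VLM result is fused into the map


def simulate(duration_ms: int = 10_000,
             plan_period_ms: int = 100,   # fast path, ~10 Hz
             vlm_period_ms: int = 3_300,  # slow path, ~0.3 Hz (assumed)
             ) -> list[tuple[int, int]]:
    """Return (time_ms, map_version) for every fast-path planning step."""
    vmap = ValueMapState()
    vlm_ready_at = vlm_period_ms  # first VLM query is launched at t = 0
    plans = []
    for t in range(0, duration_ms, plan_period_ms):
        # Slow path: fuse a VLM result only once it is ready; never wait for it.
        if t >= vlm_ready_at:
            vmap.version += 1
            vlm_ready_at = t + vlm_period_ms  # launch the next query right away
        # Fast path: replan on every tick using whatever map is current.
        plans.append((t, vmap.version))
    return plans


plans = simulate()
print(len(plans))  # 100: one plan every 100 ms, none skipped
print(plans[-1])   # (9900, 3): three VLM updates fused during the flight
```

In a real system the two paths would run as separate threads or processes sharing the map behind a lock; the discrete loop just makes the timing easy to read.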

System Operation: Three Steps

- Initialization: Drone performs a 360° environmental survey to establish an initial map.

- Exploration & Search: ADTR updates the 3D value map; SGCP generates optimal exploration trajectories.

- Target Arrival: After confirming the target, fly directly to the target location to complete the mission.
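The three-step operation reads naturally as a small state machine. The sketch below is a hypothetical illustration; the phase names and boolean trigger flags are our own, not identifiers from the paper.

```python
from enum import Enum, auto


class Phase(Enum):
    INITIALIZE = auto()  # 360-degree survey builds the initial map
    EXPLORE = auto()     # ADTR updates the value map, SGCP plans trajectories
    ARRIVE = auto()      # fly straight to the confirmed target
    DONE = auto()


def next_phase(phase: Phase, survey_done: bool = False,
               target_confirmed: bool = False, at_target: bool = False) -> Phase:
    """Advance the mission when the current phase's exit condition holds."""
    if phase is Phase.INITIALIZE and survey_done:
        return Phase.EXPLORE
    if phase is Phase.EXPLORE and target_confirmed:
        return Phase.ARRIVE
    if phase is Phase.ARRIVE and at_target:
        return Phase.DONE
    return phase  # otherwise keep executing the current phase


p = next_phase(Phase.INITIALIZE, survey_done=True)  # Phase.EXPLORE
p = next_phase(p)                                   # still Phase.EXPLORE
p = next_phase(p, target_confirmed=True)            # Phase.ARRIVE
print(next_phase(p, at_target=True))                # Phase.DONE
```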

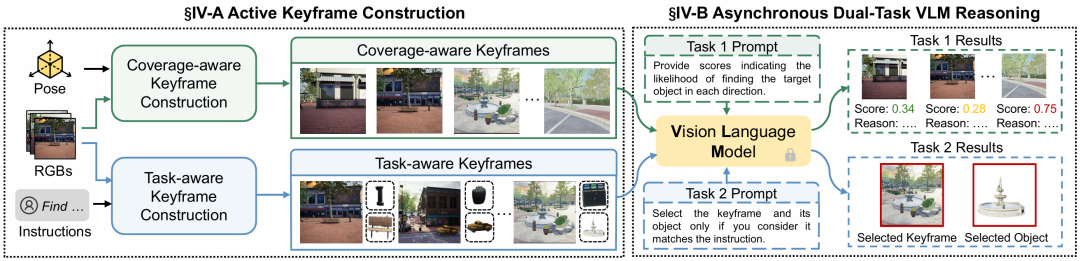

Active Dual-Task Reasoning (ADTR): Smart Frame Selection

Drone cameras generate a large volume of footage every second. Running a VLM on every frame is computationally prohibitive and introduces excessive latency. The ADTR module uses “precise filtering + dual-task reasoning” to extract the most useful semantic information at minimal computational cost.

1. Active Keyframe Construction: Keep Only Useful Frames

ADTR active keyframe selection (Source: AirHunt paper)

Two types of keyframes balance spatial coverage and task relevance:

- Coverage-aware keyframes: Filtered based on voxel overlap ratio, ensuring the drone “sees everything” without repeatedly viewing the same area. New frames are only retained when overlap falls below a threshold, eliminating redundancy.

- Task-aware keyframes: Only retain frames relevant to the target (e.g., keep frames containing trash cans when searching for trash cans), providing the VLM with the most precise verification material.
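A minimal sketch of both keyframe rules, assuming each frame has already been reduced to the set of voxel indices it observes and the set of labels an open-set detector found in it. The 0.7 overlap threshold is a hypothetical value, and overlap is measured here against voxels already covered by earlier coverage keyframes.

```python
def select_keyframes(frames, target_label, overlap_threshold=0.7):
    """Split frames into coverage-aware and task-aware keyframes.

    frames: iterable of (frame_id, observed_voxels: set, detected_labels: set).
    """
    covered = set()  # voxels seen by coverage keyframes so far
    coverage_kf, task_kf = [], []
    for frame_id, voxels, labels in frames:
        # Task-aware: keep any frame where the target category was detected.
        if target_label in labels:
            task_kf.append(frame_id)
        if not voxels:
            continue
        # Coverage-aware: keep the frame only if it is mostly new territory.
        overlap = len(voxels & covered) / len(voxels)
        if overlap < overlap_threshold:
            coverage_kf.append(frame_id)
            covered |= voxels
    return coverage_kf, task_kf


frames = [
    (0, {1, 2, 3, 4}, set()),
    (1, {1, 2, 3, 5}, {"trash can"}),  # 75% overlap: redundant for coverage
    (2, {6, 7, 8, 9}, {"bench"}),
]
print(select_keyframes(frames, "trash can"))  # ([0, 2], [1])
```

Frame 1 adds little new coverage, but it is exactly the frame the VLM should see when verifying a trash can, which is why the two keyframe types are kept separately.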

2. Asynchronous Dual-Task VLM Reasoning: Fly While Computing

Two tasks execute asynchronously, avoiding the bottleneck of high VLM latency:

- Task 1 (Semantic Value Inference): The VLM estimates the probability of finding the target in each region, generating semantic priors.

- Task 2 (Target Precision Verification): The VLM combines appearance and scene context to match the instructed target against detection results, filtering out false positives (e.g., distinguishing black from green trash cans).
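The sketch below shows the two tasks running concurrently with `asyncio` rather than one after the other. Both VLM calls are stubs: `asyncio.sleep` stands in for inference latency, a word-overlap score stands in for the VLM's value estimate, and an exact attribute match stands in for verification. The function names and data layouts are hypothetical.

```python
import asyncio


async def infer_semantic_values(regions: dict[str, str], target: str) -> dict[str, float]:
    """Task 1 stub: score each region description against the target words."""
    await asyncio.sleep(0.05)  # placeholder for VLM latency
    words = set(target.split())
    return {rid: len(words & set(desc.split())) / len(words) for rid, desc in regions.items()}


async def verify_detections(detections: list[dict], color: str, label: str) -> list[dict]:
    """Task 2 stub: keep only detections matching the instructed attributes."""
    await asyncio.sleep(0.05)  # placeholder for VLM latency
    return [d for d in detections if d["label"] == label and d["color"] == color]


async def reasoning_step(regions, detections):
    # Both tasks are in flight at once, so the wait is ~one latency, not two.
    return await asyncio.gather(
        infer_semantic_values(regions, "black trash can"),
        verify_detections(detections, "black", "trash can"),
    )


regions = {"roadside": "roadside with trash can", "lake": "lake and trees"}
detections = [{"label": "trash can", "color": "green"},
              {"label": "trash can", "color": "black"}]
values, confirmed = asyncio.run(reasoning_step(regions, detections))
print(confirmed)  # [{'label': 'trash can', 'color': 'black'}]
```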

3. 3D Value Map Synthesis: Compensating for VLM 3D Deficiency

ADTR constructs a confidence-weighted 3D voxel value map, fusing semantic information from multiple viewpoints into a global map:

- Confidence calculation: Observations of regions farther from the camera receive lower confidence.

- Value map fusion: Confidence-weighted aggregation makes semantic judgments more stable and accurate.
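One common way to realize confidence-weighted fusion is a per-voxel running weighted average. The sketch assumes a linear confidence falloff that reaches zero at a hypothetical 50 m range; the paper's actual confidence function and map resolution may differ.

```python
def confidence(distance_m: float, max_range_m: float = 50.0) -> float:
    """Assumed linear falloff: 1.0 at the camera, 0.0 at max_range_m and beyond."""
    return max(0.0, 1.0 - distance_m / max_range_m)


class ValueMap3D:
    def __init__(self) -> None:
        self.value: dict[tuple[int, int, int], float] = {}   # fused semantic value
        self.weight: dict[tuple[int, int, int], float] = {}  # accumulated confidence

    def fuse(self, voxel: tuple[int, int, int], observed: float, distance_m: float) -> None:
        """Blend a new observation into the voxel, weighted by its confidence."""
        c = confidence(distance_m)
        if c == 0.0:
            return  # too far away to trust at all
        w = self.weight.get(voxel, 0.0)
        v = self.value.get(voxel, 0.0)
        self.value[voxel] = (w * v + c * observed) / (w + c)
        self.weight[voxel] = w + c


m = ValueMap3D()
m.fuse((1, 2, 0), 0.9, distance_m=10.0)  # close, confident view: value 0.9
m.fuse((1, 2, 0), 0.3, distance_m=40.0)  # distant, low-confidence disagreement
print(round(m.value[(1, 2, 0)], 2))      # 0.78
```

The distant observation disagrees strongly but only nudges the fused value, which is the stabilizing effect the fusion step is meant to provide.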

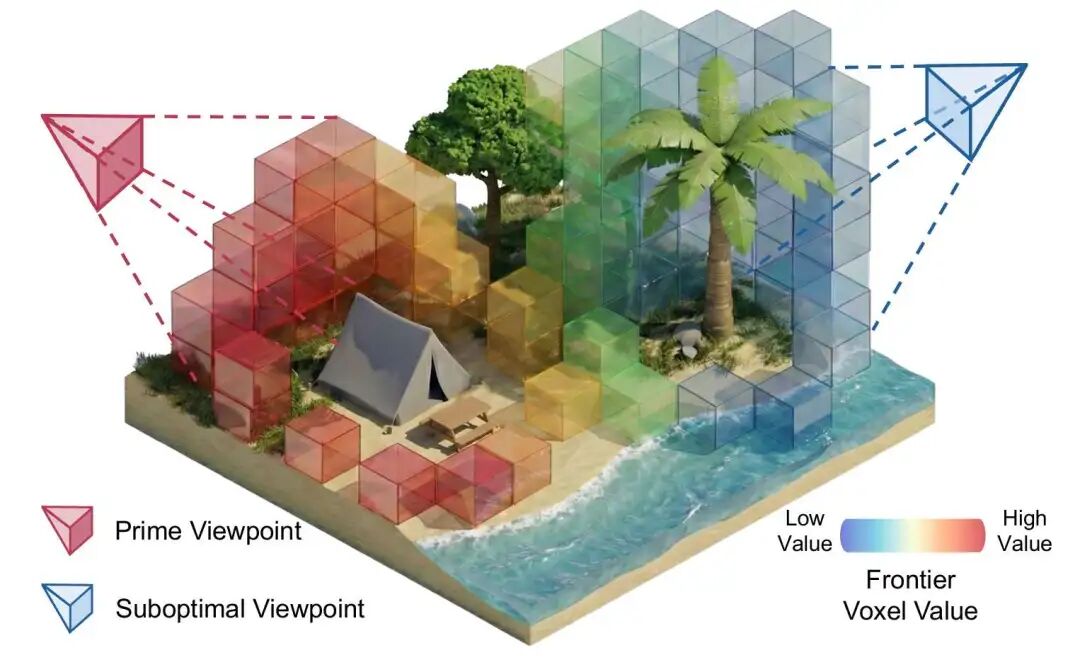

Semantic-Geographic Consistency Planning (SGCP): Optimal Routes

SGCP resolves the conflict between “wanting to explore key areas” and “wanting to fly the shortest path” through hierarchical planning — making the drone both semantically aware and efficiency-minded.

1. Semantic Frontier Construction: Identify Key Exploration Zones

Building on traditional geometric exploration frontiers, SGCP adds semantic constraints to cluster regions that are semantically similar and close in distance, so the drone can prioritize high-value areas.
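A greedy sketch of semantic frontier clustering: a frontier cell joins an existing cluster only if it is both close to that cluster's seed and similar in semantic value. The 10 m distance and 0.2 value-gap thresholds are hypothetical, and the paper's clustering procedure may differ.

```python
import math


def cluster_frontiers(cells, max_dist=10.0, max_value_gap=0.2):
    """Greedily group frontier cells that are near each other AND semantically similar.

    cells: list of ((x, y, z), value) pairs, value taken from the 3D value map.
    Returns clusters sorted by mean value, highest first.
    """
    clusters = []  # each cluster: {"seed": (pos, value), "members": [...]}
    for pos, val in cells:
        for c in clusters:
            seed_pos, seed_val = c["seed"]
            if math.dist(pos, seed_pos) <= max_dist and abs(val - seed_val) <= max_value_gap:
                c["members"].append((pos, val))
                break
        else:  # no compatible cluster found: start a new one
            clusters.append({"seed": (pos, val), "members": [(pos, val)]})

    def mean_value(c):
        return sum(v for _, v in c["members"]) / len(c["members"])

    return sorted(clusters, key=mean_value, reverse=True)


cells = [((0, 0, 0), 0.9), ((3, 0, 0), 0.85),  # near and similar: one cluster
         ((4, 0, 0), 0.2),                      # near but semantically different
         ((50, 0, 0), 0.9)]                     # similar value but far away
for c in cluster_frontiers(cells):
    print([p for p, _ in c["members"]])
```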

2. Semantic-Geographic Viewpoint Generation: Select Best Observation Positions

Viewpoint generation with information gain calculation (Source: AirHunt paper)

For each exploration cluster, candidate viewpoints are generated and information gain is calculated to select positions that can observe the most high-value regions, avoiding ineffective flight.
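A simplified sketch of value-weighted information gain: each candidate viewpoint is scored by the total value of not-yet-observed voxels within sensor range, and the best-scoring one is selected. The 20 m range is hypothetical, and occlusion and field of view are ignored here for brevity.

```python
import math


def information_gain(viewpoint, voxel_values, observed, sensor_range=20.0):
    """Sum the semantic value of unobserved voxels the viewpoint could see."""
    return sum(v for vox, v in voxel_values.items()
               if vox not in observed and math.dist(viewpoint, vox) <= sensor_range)


def best_viewpoint(candidates, voxel_values, observed, sensor_range=20.0):
    """Pick the candidate position with the highest information gain."""
    return max(candidates,
               key=lambda p: information_gain(p, voxel_values, observed, sensor_range))


voxel_values = {(0, 0, 0): 0.9, (5, 0, 0): 0.8, (40, 0, 0): 0.3}
print(best_viewpoint([(2, 0, 0), (35, 0, 0)], voxel_values, set()))  # (2, 0, 0)
```

Once the two high-value voxels near the origin are marked as observed, the same call switches to `(35, 0, 0)`, since that is the only candidate that still sees anything new.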

3. Selective Constraint Injection Optimization: Global Optimal Route Planning

Route planning over the selected viewpoints is formulated as a Sequential Ordering Problem (SOP), a routing problem with precedence constraints:

- Large semantic differences: High-value regions must be visited first.

- Similar semantics: Sort by shortest flight distance.

- Geometric cost: Combine flight distance and turning angle to compute the optimal path.
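To show how selective constraints and geometric cost interact, here is a brute-force solver for a tiny instance. Precedence constraints are injected only between viewpoints whose values differ by more than a hypothetical gap of 0.3; the remaining freedom is resolved by flight distance plus a weighted turning angle (the weight 2.0 is also assumed). Exhaustive search is only practical for a handful of viewpoints, and positions are 2D for brevity; this is an illustration of the SOP idea, not AirHunt's solver.

```python
import itertools
import math


def precedence_pairs(values, gap=0.3):
    """Selectively inject 'i before j' only where the semantic gap is large."""
    n = len(values)
    return {(i, j) for i in range(n) for j in range(n) if values[i] - values[j] > gap}


def turn_angle(a, b, c):
    """Heading change (radians) at b when flying a -> b -> c."""
    v1 = (b[0] - a[0], b[1] - a[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1, n2 = math.hypot(*v1), math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        return 0.0
    cos_t = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    return math.acos(max(-1.0, min(1.0, cos_t)))  # clamp rounding error


def route_cost(path, w_turn=2.0):
    """Geometric cost: total distance plus weighted sum of turning angles."""
    dist = sum(math.dist(p, q) for p, q in zip(path, path[1:]))
    turns = sum(turn_angle(a, b, c) for a, b, c in zip(path, path[1:], path[2:]))
    return dist + w_turn * turns


def plan_order(start, points, values, gap=0.3, w_turn=2.0):
    """Cheapest visiting order that respects every injected precedence pair."""
    prec = precedence_pairs(values, gap)
    best, best_cost = None, math.inf
    for order in itertools.permutations(range(len(points))):
        rank = {k: i for i, k in enumerate(order)}
        if any(rank[i] > rank[j] for i, j in prec):
            continue  # violates a semantic precedence constraint
        cost = route_cost([start] + [points[k] for k in order], w_turn)
        if cost < best_cost:
            best, best_cost = list(order), cost
    return best, best_cost


points = [(5, 0), (-20, 0), (-20, 10)]
values = [0.2, 0.9, 0.85]  # point 0 is close but low-value
print(plan_order((0, 0), points, values)[0])           # [1, 2, 0]
print(plan_order((0, 0), points, values, gap=1.0)[0])  # [0, 1, 2]: no constraints injected
```

With constraints, the two high-value viewpoints come first and their relative order is settled by geometry; without them, the purely geometric optimum detours to the nearby low-value point first.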

4. Continuous Trajectory Optimization: Smooth and Safe Flight

The planner then generates smooth B-spline trajectories that satisfy drone dynamics constraints, replanning in real time to avoid obstacles and ensure flight safety.
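The curve underlying such a trajectory is straightforward to evaluate. The sketch below samples a uniform cubic B-spline from its control points using the standard basis functions; it does not perform AirHunt's optimization of control points for smoothness, clearance, and dynamic limits, and the control points are hypothetical. A property that makes such optimization tractable is that each segment stays inside the convex hull of its four control points, so constraints placed on control points bound the curve.

```python
def cubic_bspline_point(p0, p1, p2, p3, u):
    """Point on one uniform cubic B-spline segment, u in [0, 1]."""
    b0 = (1 - u) ** 3 / 6
    b1 = (3 * u**3 - 6 * u**2 + 4) / 6
    b2 = (-3 * u**3 + 3 * u**2 + 3 * u + 1) / 6
    b3 = u**3 / 6
    return tuple(b0 * a + b1 * b + b2 * c + b3 * d for a, b, c, d in zip(p0, p1, p2, p3))


def sample_trajectory(ctrl, samples_per_segment=10):
    """Sample the whole curve; n control points give n - 3 segments."""
    pts = []
    for i in range(len(ctrl) - 3):
        for k in range(samples_per_segment):
            pts.append(cubic_bspline_point(*ctrl[i:i + 4], k / samples_per_segment))
    pts.append(cubic_bspline_point(*ctrl[-4:], 1.0))  # close the final segment
    return pts


ctrl = [(0, 0, 2), (2, 0, 2), (4, 1, 2), (6, 3, 3), (8, 3, 3)]  # hypothetical control points (m)
traj = sample_trajectory(ctrl, samples_per_segment=5)
print(len(traj))  # 11: two segments x 5 samples + final point
```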

Benchmarks & Analysis: Data-Driven Performance

Experimental Setup

Simulation environments: city center, lakeside, community, Venice-style scenes (Source: AirHunt paper)

- Simulation platform: Unreal Engine 4.27, 4 categories of outdoor scenes (city center, lakeside, community, Venice).

- Test tasks: 85 tasks, 25 categories of open-set targets, covering daily and complex scenarios.

- Baseline comparisons: PRPSearcher, UAV-on, FlySearch, STARSearcher (current mainstream drone navigation solutions).

Core Performance Comparison

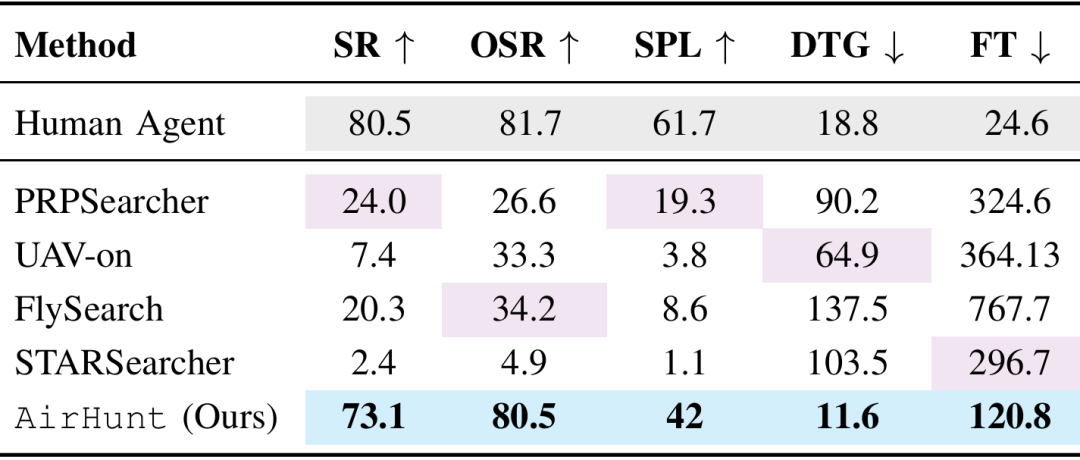

Performance comparison: AirHunt vs. baseline methods (Source: AirHunt paper)

| Metric | AirHunt | Best Baseline | Improvement |
|---|---|---|---|
| Success Rate | 73.1% | ~24% | 3x |
| Navigation Error | 11.6 m | ~58 m | -80% |
| Flight Time Reduction | 59.2% | n/a | Near professional pilot level |

Key conclusion: AirHunt’s success rate is about 3x that of the best baseline, its navigation error is 80% lower, and its flight time is nearly 60% shorter, approaching professional pilot performance.
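The headline ratios can be checked directly from the table. The baseline figures are approximate (“~24%” and “~58m”), so the results are approximate too.

```python
airhunt_sr, baseline_sr = 73.1, 24.0  # success rate in %, baseline approximate
airhunt_ne, baseline_ne = 11.6, 58.0  # navigation error in m, baseline approximate

sr_ratio = airhunt_sr / baseline_sr                      # ~3.05x
ne_reduction = (baseline_ne - airhunt_ne) / baseline_ne  # ~0.80

print(f"success rate: {sr_ratio:.2f}x")        # success rate: 3.05x
print(f"error reduction: {ne_reduction:.0%}")  # error reduction: 80%
```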

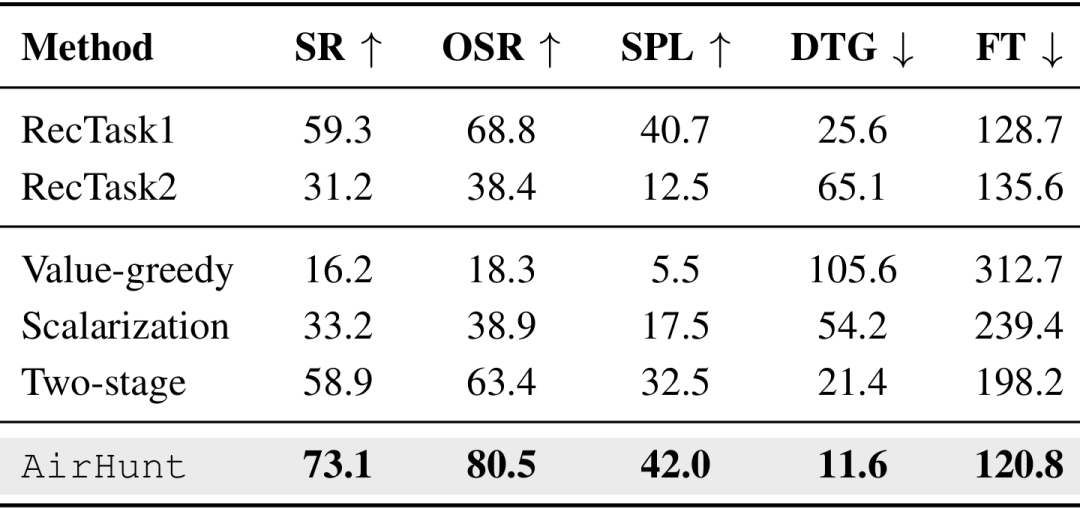

Ablation Study: Core Modules Are Essential

Ablation study: impact of removing ADTR and SGCP (Source: AirHunt paper)

- Without ADTR: Success rate plummets to 59.3%/31.2% — keyframe filtering is the core of semantic understanding.

- Replacing SGCP: Swapping it for a greedy planner or a scalarized (weighted-sum) objective degrades performance significantly, showing that constrained optimization is key to efficient navigation.

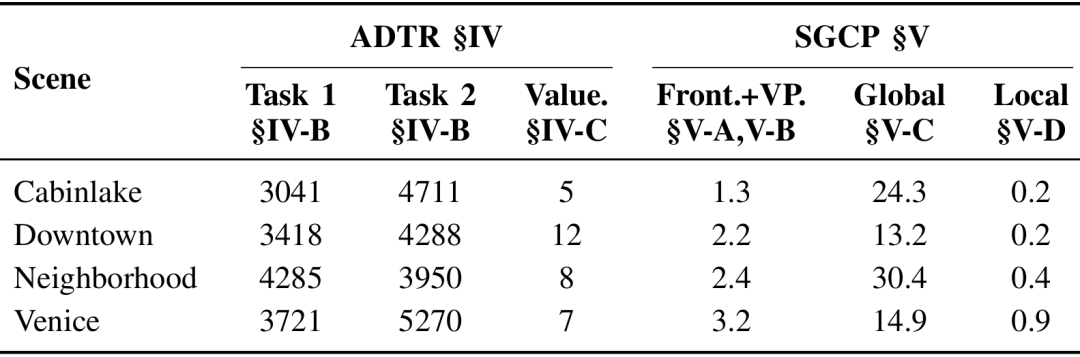

Computation & Failure Analysis

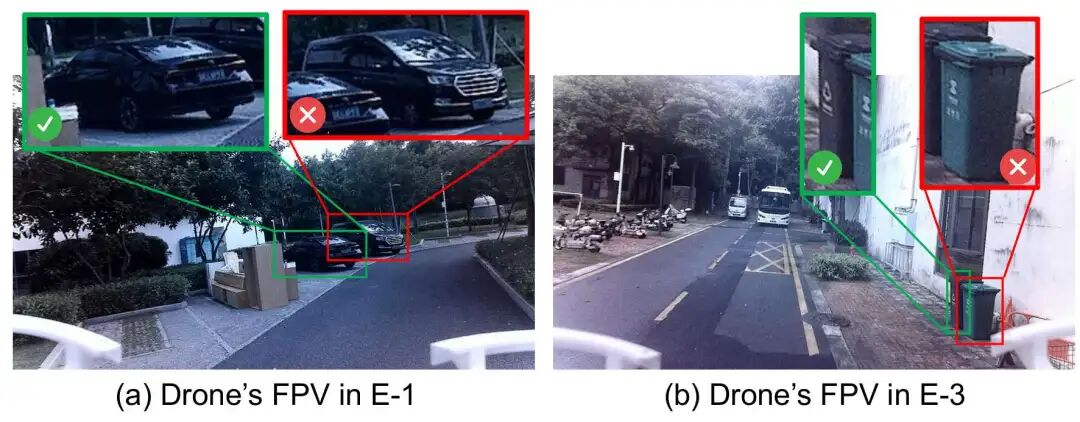

Failure mode comparison (Source: AirHunt paper)

SGCP's global planning adds only minimal computational overhead. Baseline methods mostly fail through flight timeouts and obstacle collisions, whereas AirHunt fails only rarely, mainly due to detector misclassification, demonstrating strong stability.

Real-World Experiments: Outdoor Flight, Ready for Deployment

Flight Platform

Custom quadcopter equipped with an Intel NUC 11 Pro, a Mid360 LiDAR, 3 wide-angle cameras, and the FAST-LIO localization and mapping system. The VLM is accessed remotely over a 4G network. Flights are fully autonomous, with zero human intervention.

Three Real-World Scenarios

Real-world test scenarios: park, landscape, residential (Source: AirHunt paper)

- Park scenario: Instruction “find the black sedan in the parking lot” → precise localization, distinguishing sedans from MPVs.

- Landscape scenario: Instruction “find the white chair next to the building” → collision-free arrival amid complex obstacles.

- Residential scenario: Instruction “find the black trash can by the roadside” → precise distinction between black/green trash cans, rapid target acquisition.

Real-World Results

Real-world flight trajectories and results (Source: AirHunt paper)

- All three tasks achieved 100% success, with the shortest flight time being only 73.2 seconds.

- Trajectories showed no redundant detours, no collisions, no repeated searches.

- Ten hours of real-world flight validation: stable and usable in complex outdoor environments, ready for deployment.

Conclusion: A New Paradigm for Drone Outdoor Navigation

The AirHunt system completely breaks the barrier between VLMs and drone real-time planning. Through dual-path asynchronous architecture, active dual-task reasoning, and semantic-geographic consistency planning, it solves the industry’s long-standing frequency mismatch, weak 3D understanding, and efficiency imbalance problems.

Whether in simulation or real outdoor environments, AirHunt achieves continuous flight, precise object finding, and high energy efficiency — providing a deployable, high-performance new paradigm for autonomous drone operations in emergency rescue, security patrol, urban services, and wilderness exploration.

At Aomway, we’re excited to see how VLM-powered autonomous systems like AirHunt will transform industries from logistics to public safety. The future of intelligent drones is here — and it understands natural language.

Frequently Asked Questions

Q: What is AirHunt and what problem does it solve?

AirHunt is a drone navigation system that enables continuous, zero-shot open-set object search in outdoor environments using Vision-Language Models (VLMs). It solves three critical problems: (1) the mismatch between VLM inference latency (over 2000 ms) and the drone's 10 Hz planning rate, (2) weak 3D scene understanding in VLMs, and (3) inefficient trade-offs between semantic exploration and flight efficiency.

Q: How does AirHunt achieve continuous flight without hovering?

AirHunt uses a dual-path asynchronous architecture: a slow path (0.3Hz) runs VLM semantic reasoning to asynchronously update a 3D value map, while a fast path (10Hz) performs real-time trajectory planning. This decouples AI inference from flight control, allowing the drone to fly continuously while ‘thinking’ in the background.

Q: What is the 3D value map in AirHunt?

The 3D value map is a voxel-based representation that fuses semantic information from multiple camera viewpoints with confidence weighting. It compensates for VLMs’ weakness in 3D understanding by aggregating cross-view semantic cues into a global spatial map that the planner can query at high frequency.

Q: What are ADTR and SGCP in AirHunt?

ADTR (Active Dual-Task Reasoning) intelligently filters keyframes to reduce VLM computational load by 80%+, running semantic value inference and target verification asynchronously. SGCP (Semantic-Geographic Consistency Planning) hierarchically plans exploration routes that balance semantic priority with flight efficiency, using selective constraint injection optimization.

Q: How does AirHunt perform compared to traditional methods?

In benchmarks across 85 tasks in 4 outdoor scene categories, AirHunt achieves a 73.1% success rate (about 3x the best baseline), an 11.6 m navigation error (an 80% reduction), and 59.2% shorter flight time. In 10 hours of real-world testing, it succeeded on all three complex real-world tasks, with flight times as low as 73.2 seconds.

Q: What hardware does AirHunt use for real-world experiments?

The real-world platform is a custom quadcopter with an Intel NUC 11 Pro onboard computer, a Mid360 LiDAR, 3 wide-angle cameras, and FAST-LIO for localization and mapping. The VLM runs remotely and is accessed over a 4G network, enabling fully autonomous operation without human intervention.

Q: Where can I learn more about drone technology and autonomous systems?

Visit Aomway for regular deep dives into drone technology, autonomous navigation systems, VLM applications, and the latest advances in robotics and AI. Our comprehensive analysis helps engineers and researchers stay ahead of the curve.
